Xingtu Liu

About

I am an incoming PhD student in statistics and data science at the University of California, Los Angeles. I obtained my Master’s degree in computing science at Simon Fraser University and my Bachelor’s degree in mathematics at the University of Waterloo. In the past, I was very fortunate to be advised by Sharan Vaswani and Gautam Kamath. I am interested in building theoretical and algorithmic foundations for reinforcement learning and machine learning, with a current focus on continuous-time RL. My name is pronounced shin-two.

Google Scholar; Twitter.

Contact

rltheory@outlook.com

Selected Work (All Papers)

Sample Complexity Bounds for Linear Constrained MDPs with a Generative Model.

Xingtu Liu, Lin F. Yang, Sharan Vaswani.

International Conference on Algorithmic Learning Theory (ALT), 2026.

NeurIPS 2025 Workshop on Constrained Optimization for Machine Learning.

Improved Rates for Differentially Private Stochastic Convex Optimization with Heavy-Tailed Data.

Gautam Kamath*, Xingtu Liu*, Huanyu Zhang*.

International Conference on Machine Learning (ICML), 2022.

Oral Presentation (2.1% Acceptance Rate).

Teaching

Teaching Assistant (SFU)

CMPT 409/981 – Optimization for Machine Learning (Fall 2025)

CMPT 210 – Probability and Computing (Summer 2025)

CMPT 120 – Introduction to Computing Science and Programming (Spring 2024, Spring 2025)

MACM 101 – Discrete Mathematics (Fall 2023)

Service

Conference Reviewer: ICML 2025, NeurIPS (2024-2025), ICLR (2025-2026), AISTATS (2022-2024), RLC 2025, IJCNN 2025

Journal Reviewer: TMLR

Workshop Reviewer: NeurIPS-OPT 2025

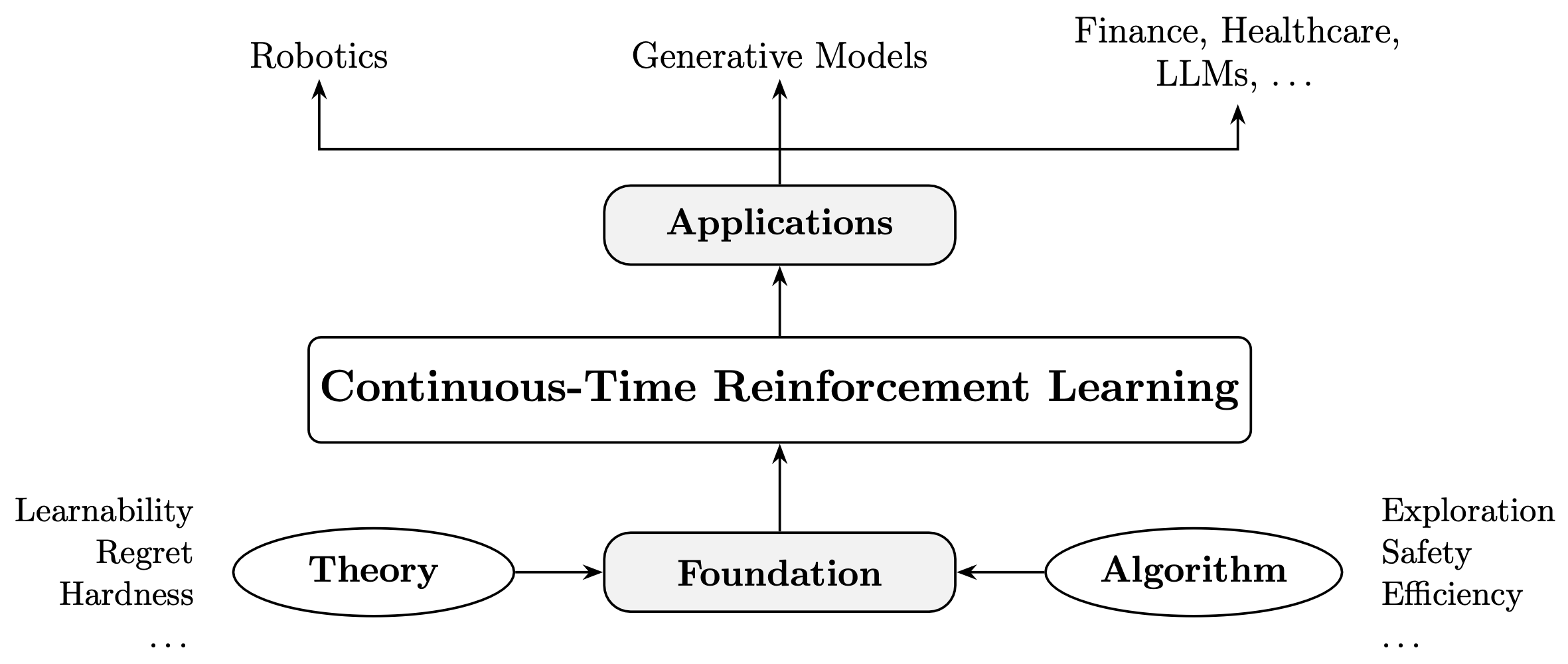

Research Overview

Why Continuous-Time Reinforcement Learning?

In practice, we need to better understand learning and decision-making in the physical world.

In theory, we need to bridge the gap between RL and stochastic control (see, e.g., Recht, 2019).